Why Resident Surveys Are Still Valuable for Finding Out What Citizens Want and Need

By NRC on July 6, 2017

What Makes for Credible Resident Surveys?

- By Thomas Miller and Michelle Kobayashi - Pub. ICMA

"Nobody wants more taxes to fund a homeless shelter!"

"People don't feel safe here anymore!"

"You cannot expect the community to support one-way streets downtown!"

"We need branch libraries out east!"

If you haven't heard self-appointed community spokespeople make these very statements, you've likely heard plenty of other proclamations in public testimony or read countless letters to the editor from residents sure that they speak for everyone.

So much in survey research has changed since we wrote the first version of this story in PM 16 years ago; however, the fundamental uses of surveys have blossomed, making this an expansive era in public opinion surveying.

Surveys are at the heart of public policy, private sector innovation, predictions about social behavior, product improvement, and more. Search "public opinion survey" on Google and you get 10.2 million entries. "Citizen surveys for local government" will get you 32.6 million hits (on 2/22/2017).

We shed more ink in 2001, explaining what a citizen survey was and why it was needed, than we need to do today. The fundamental definition is the same: A citizen survey is a questionnaire usually completed by 200 to 1,000 representative residents that captures the community sentiment about the quality of community life, service delivery, public trust, and public engagement.
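To see why a sample of a few hundred residents can stand in for a whole community, it helps to look at the sampling margin of error. The sketch below is illustrative only: it assumes a simple random sample, a 95 percent confidence level, and the most conservative case of a 50/50 split in opinion, and it ignores other sources of error such as non-response. The function name `margin_of_error` is our own, not part of any survey library.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion from a simple random sample.

    n: number of completed surveys
    p: assumed share giving a particular answer (0.5 is the worst case)
    z: z-score for the confidence level (1.96 is roughly 95 percent)
    """
    return z * math.sqrt(p * (1 - p) / n)

# Sample sizes in the range the article describes
for n in (200, 400, 1000):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} percentage points")
```

Under those assumptions, 200 responses give a margin of roughly plus or minus 7 percentage points, and 1,000 responses give roughly plus or minus 3.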

You'd be hard-pressed to find a local government manager who has not done a survey, heard a colleague talk about one, or who does not understand it to be a leading practice in local government management.

Changing Times

As interest in resident opinions explodes, the survey industry is fighting to keep up with changes in technology for collecting those opinions. In 2001, we wrote about "Telephone's Unspeakable Problem."

Who answers the landline anymore when an anonymous call sneaks through call-blocking? (Answer: Almost no one.) Who even has a landline anymore? (Answer: Only about 53 percent of households.)

This is why well-conducted telephone surveys are expensive. Today's phone surveys should include cellphone responses from 50 to 75 percent of participants and must avoid connections with children or people who are driving. Under federal law, calls must also be hand-dialed, not dialed by automated equipment.

Residents' patience for responding to any survey is wearing thin. Response rates have dropped on average from 36 percent by telephone in 1997 to 9 percent today. In fact, response rates by mail or Web are lower today, too.

The good news is that research has shown that despite a low response rate, surveys are gathering public opinion that pretty well represents the target community.

Today, the problem has moved to data collection on the Web. Costs to gather opinions from anyone who wants to click a well-publicized link clearly are much lower than traditional "probability sampling," where residents are invited by the equivalent of a lottery to participate.

Opt-in Web surveys let anyone participate without having to sample invitees, but the angry or the Internet-connected may differ from the typical resident. The problem is that there is not yet any way to know how well opt-in Web survey responses reflect the opinions of the community.

This uncertainty afflicts resident engagement platforms, too, where anyone can enter and post a comment or vote. Members of the American Association for Public Opinion Research are fretting and working overtime to learn more about making the Web a reliable part of survey data collection.

Generating Responses

As interest grows in what people think but costs rise and responses fall, something's got to change, and changes are in the making. Sampling based on addresses--rather than telephone numbers or Web links--has come into fashion and old-school mailed surveys continue to garner higher response rates than any other means of collecting citizen surveys. Residents asked to participate by mail or to respond on the Web are invited using traditional random sampling techniques so that results are more credibly representative of the community.
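The "lottery" behind address-based sampling can be sketched in a few lines. This is a minimal illustration, not a description of any survey firm's procedure: the address list is made up, and the helper name `draw_address_sample` is hypothetical. In practice the sampling frame would be a real list of residential addresses in the community.

```python
import random

def draw_address_sample(addresses, sample_size, seed=None):
    """Pick households at random, without replacement, so that every
    address on the list has the same chance of being invited."""
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible
    return rng.sample(addresses, sample_size)

# Hypothetical sampling frame of 1,000 residential addresses
address_list = [f"{number} Main St" for number in range(100, 1100)]

invited = draw_address_sample(address_list, sample_size=50, seed=2017)
print(len(invited), "households invited, for example:", invited[:3])
```

Because the draw does not depend on who is angry, who is online, or who happens to see a link, the invited households are a fair cross-section of the list they were drawn from.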

Some companies have created their own national Web panels of survey takers who usually are recruited on the Web and paid to participate (e.g., Harris Interactive, Google, SurveyMonkey). These panels typically are balanced to reflect national demography. In midsize communities, however, there still are too few panel members to generate enough participation, and these paid participants are not a random sample.

Resident surveys can now be completed on handheld devices--texted to respondent smartphones or completed on tablets--though the number of questions tolerated on these small computers remains quite limited.

Social media traffic is now being interpreted by automated text readers that aggregate messages to determine what the public thinks about current events, though some caution that, on Twitter for example, only 30 percent of what passes is generated by people, with 70 percent created by nonhuman bots.

Other kinds of big data--from transponders affixed to vehicles, cameras aimed at pedestrian high-traffic areas, geographic sensing of cellphone "pings," and Web searches--provide data that can construe human activity and circumstances.

So far, though, these data are not good at measuring resident opinion, and there have been a few big "misses" that have undermined the credibility of some inferences. An example: Google thought (as it turned out, wrongly) that it could predict the loci of flu epidemics faster and more accurately than the Centers for Disease Control and Prevention by using millions of Google searches.

What Makes for Credible Resident Surveys?

When results of a survey are in, the client wants to know: "How confident can I be that the results of the survey sample are close to what all adults in the community think?"

While there is no certainty that the results are close to what you'd get from answers by all residents, effective survey research usually gets it right. Here are some clues that you're getting credible survey research:

The survey report is explicit about the research methods. Questions include: Who sponsored the survey? How was the sample selected? What was the list from which the sample was selected? What percent of the people who were asked to participate in fact did participate? Were both households and respondents in households selected without bias? Were data weighted and if so, what weighting scheme was used? Were potential sources of error, in addition to sampling error, reported?

A shorthand for understanding if standards of quality are met is encapsulated in the mark of the American Association for Public Opinion Research's (AAPOR's) Transparency Initiative (TI). Research has shown that TI members have done better in election polls than have pollsters that were not members.

The report specifies how potential for non-response bias is mitigated. This is where most reputable organizations weight data to better reflect the demographic profile of the community.

Respondents are selected at random and their candor is encouraged. Responses are anonymous and given in self-administered questionnaires (mail or Web) instead of in person or by telephone.

If data are collected via mail, Web, and phone, there is some adjustment to account for well-established research showing that those who respond by phone give more glowing evaluations than do those who respond in self-administered surveys.
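The weighting mentioned above can be illustrated with a small sketch of one common approach, post-stratification on a single demographic variable. Everything here is assumed for illustration: the age groups, the population shares, the ten made-up responses, and the function names. Real weighting schemes usually balance several characteristics at once.

```python
from collections import Counter

def poststratification_weights(respondent_groups, population_shares):
    """Weight for each group = its share of the population divided by
    its share of the respondents."""
    counts = Counter(respondent_groups)
    n = len(respondent_groups)
    return {g: population_shares[g] / (counts[g] / n) for g in counts}

def weighted_share(answers, respondent_groups, weights):
    """Weighted proportion of respondents whose answer is True."""
    total = sum(weights[g] for g in respondent_groups)
    favorable = sum(weights[g] for a, g in zip(answers, respondent_groups) if a)
    return favorable / total

# Made-up example: older residents are over-represented among respondents
groups = ["55+"] * 7 + ["18-54"] * 3
supports = [True] * 6 + [False] + [True] + [False] * 2
population_shares = {"18-54": 0.6, "55+": 0.4}  # hypothetical community profile

weights = poststratification_weights(groups, population_shares)
unweighted = sum(supports) / len(supports)
weighted = weighted_share(supports, groups, weights)
print(f"Unweighted support: {unweighted:.0%}; weighted support: {weighted:.0%}")
```

In this made-up case, raw support of 70 percent drops to about 54 percent once younger residents, who were under-represented among respondents, count in proportion to their share of the community.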

Ensuring Worthwhile Results

These days, most managers are alert to the core content of citizen surveys. Slowly building now is the connection between the data collected and the actions needed to make those data useful.

As data proliferates, its sheer volume intimidates leaders and, frankly, everyone else who wants to make sense of the information. Here are ways to ensure the survey results you have are worth the time and cost to get them, making the data more useful:

Use benchmarks. It is one thing to know that code enforcement is no rose in the garden of resident opinion, but it's another to find out that your code enforcement stinks compared to others. Comparing resident opinions in your community to those in others adds a needed context for interpreting survey results.

And with the right benchmarks, you now can see what communities of your size, in your geographic locale, and with your kind of resident demography are saying that may be the same as or different from what you hear at home.

Track results across time. Not only will benchmarks from other communities help to ensure interpretable results, but comparing results this year to results from years past also will strengthen your understanding of what has been found. If you've taken the right actions with survey results and other sources of information, your community should be improving, residents should be noticing, and metrics should be reflecting the change.

Examine results in different parts of the community and for different kinds of residents. Average survey results are not the same everywhere or for everyone. If older residents and younger residents don't see eye to eye about the importance of public safety, for example, you should know.

Get data from all the key sectors. Surveys of residents should be augmented with surveys of local government employees, nonprofit managers, and the business community. This way you can compare how each group sees the successes and challenges faced, and you can identify their unique needs.

Go beyond passive receipt of results and broaden the call to action. Conduct action-oriented meetings to identify next steps that the data and your knowledge of the community suggest. In workshops, you can engage not only staff and elected officials but community nonprofits, businesses, and residents to identify a small number of survey-inspired outcomes to achieve within a set period of time.

Get acquainted with how others use their survey data. National Research Center offers insights through the Voice of the People Awards and the Playbook for community case studies.

Think broadly about where survey results can be used. It's not only about communication. Consider where else you can effect community change that you find is needed through your citizen survey. Understand the "Six E's of Action": envision, engage, educate, earmark (see example below), enact, and evaluate.

Example: One of the Six E's of Action: Earmark

One way to act on survey findings is to use them for budget allocations. Surveys give insight into new programs that could be supported by residents. In Pocatello, Idaho, for example, some passionate residents made a case to city council that the local animal shelter was not habitable even for the most decrepit mongrel.

Councilmembers put a question on Pocatello's citizen survey and more than 85 percent of respondents indicated support for an improved facility for strays. Within a year, and by more than the 66 percent minimum required by law, residents voted approval for a bond issue of $2.8 million and the facility was built.

Featured Image: Tom Miller and Michelle Kobayashi with Trinidad, CO Assistant City Manager Anna Mitchell receiving latest book on citizen surveys.

This article was originally published in Public Management (PM) magazine by ICMA, July 2017.

Related Articles

- 6 Ways Survey Data Can Empower Your Community

- Time to Rethink Performance Measurement

- National Research Center, Inc. and the Transparency Initiative

Popular posts

Sign-up for Updates

You May Also Like

These Related Stories

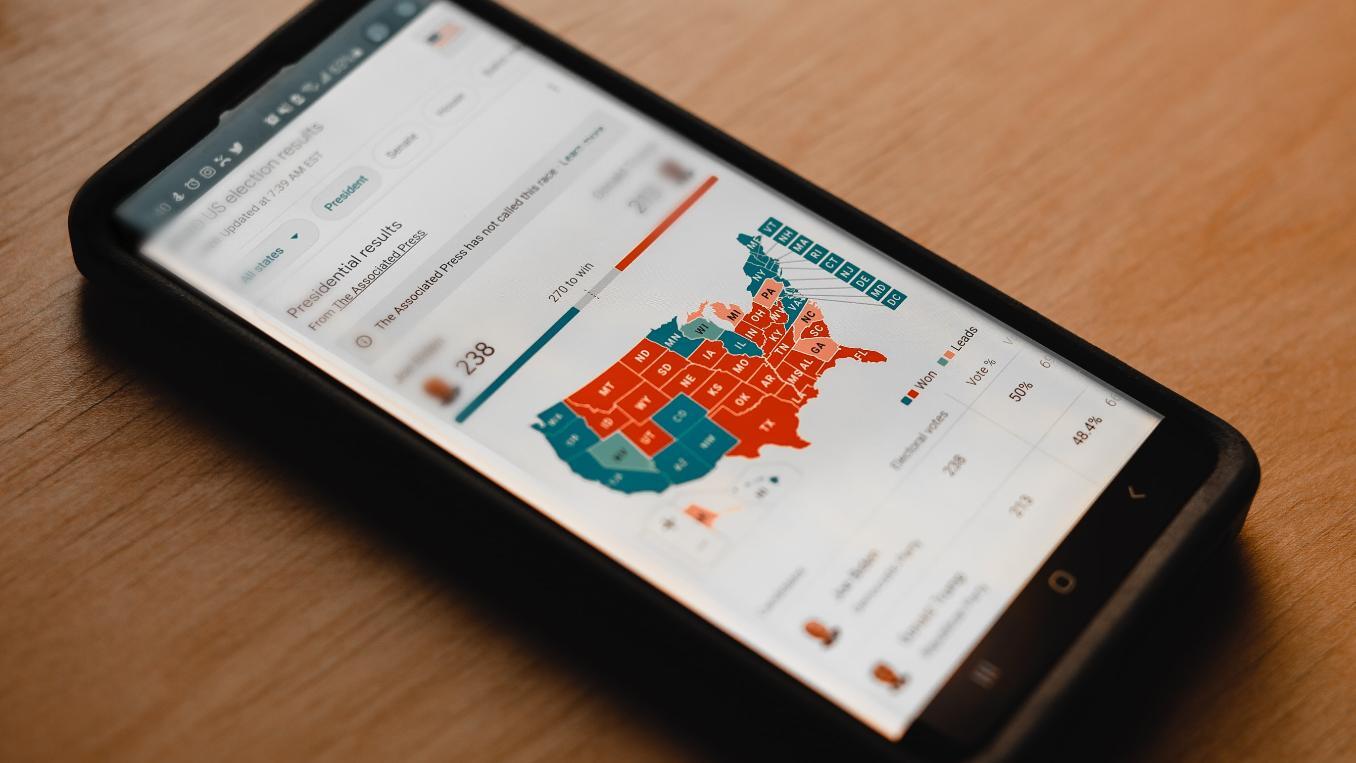

Why Political Polling Is Often Wrong

Old School or New Tech: What Is the Difference with Surveys?