Your Citizen Survey is NOT a Political Poll

By NRC on July 19, 2018

-By Tom Miller-

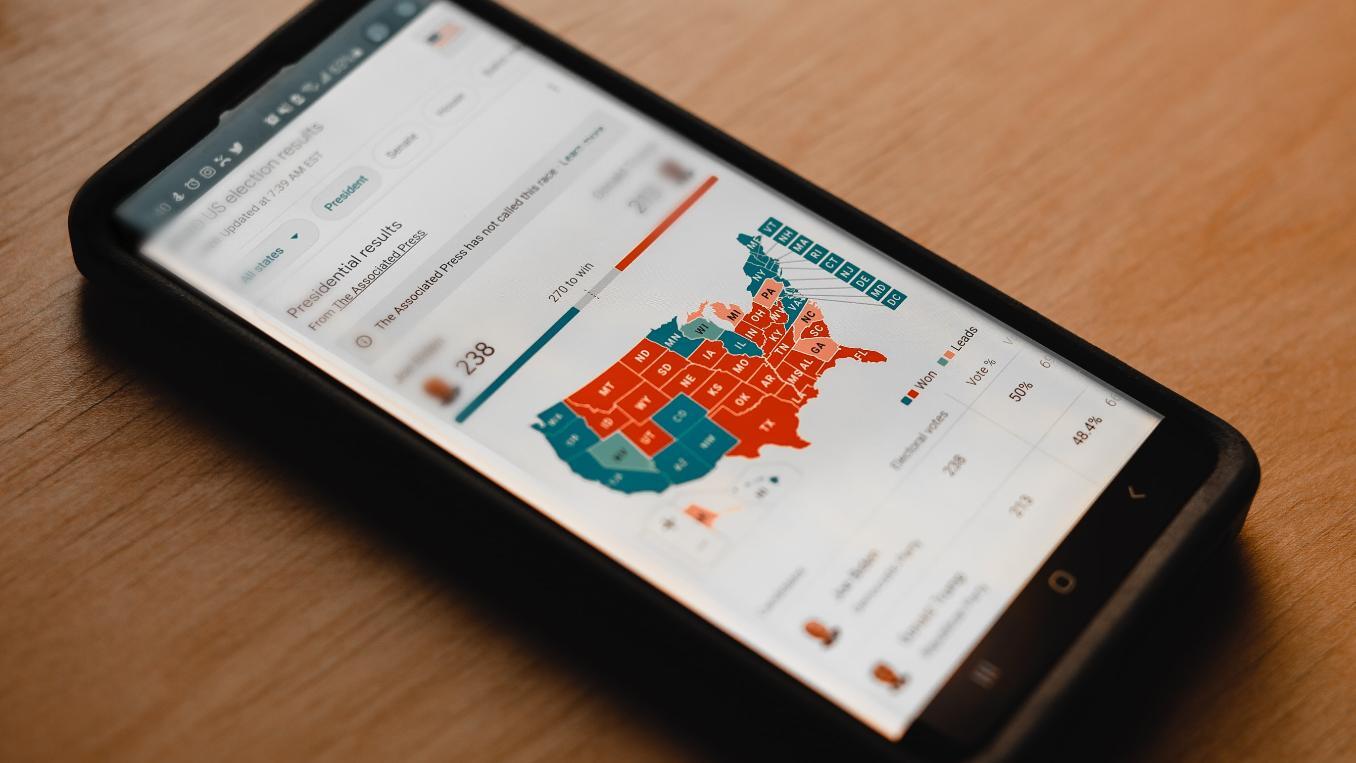

In 2016, election polls left many around the world expecting a Clinton victory that never came. The wrong calls came from results that were largely within the margins of uncertainty, both nationally and in the swing states. Pollsters were still remorseful over the miss, and much of the public remained shocked. The surprise dented the reputation of political polls, and some worry it could grow into a broader distrust of surveys. Before local government stakeholders start to worry about their own citizen surveys, however, it’s worth taking a moment to understand how fundamentally different political polls are from local government surveys.

Surveys and polls may belong to the same class (like mammals), but they are by no means the same species (think dolphins vs. foxes). Citizen surveys collect evaluations of local government services and community quality of life. Political polls predict how votes will split between incumbents and challengers.

Seven Ways Citizen Surveys are More Trustworthy than Political Polls

1. Methodology

The most substantive difference between political polls and citizen surveys lies in their purposes, which lead to fundamentally different methods. Citizen surveys deliver policy guidance, performance tracking and planning insights based on current resident sentiment. Polls use surveys to predict a future outcome. Although the underlying data are the same kind, polls apply statistical models to “guess” which demographic groups will vote and in what numbers. To show how far poll conclusions can drift from the survey results beneath them, The New York Times gave the same survey results to four different pollsters and got back four different estimates of the presidential race.

2. Social Desirability Bias

Political questions typically carry strong emotional weight, which pushes respondents to give interviewers what they believe is the socially acceptable answer. This helps explain why Trump did worse in telephone interview polls but better when responses could be given without an interviewer (e.g., in robocalls or on the Web). In citizen surveys the stakes are lower, and there is no pressure to name an “acceptable” candidate. And when conducted with a self-administered questionnaire (mail or Web), citizen surveys avoid altogether the pressure on participants to inflate their evaluations of community quality.

3. Gamesmanship

Political polls influence votes and must account for voter gamesmanship, but no such forces are at play in citizen surveys that seek evaluations of city services and community quality of life. As elections draw near, those favoring third-party candidates may change positions depending on published poll results. For example, a supporter of the Libertarian candidate may decide at the last minute to vote for a major-party candidate because polls show the two-party race has tightened. Even voters of the major parties may switch candidates after the last poll is conducted. Citizen survey results often come out just once every year or two, so there are no recently published results to shift a respondent’s choices and no winner to pick.

4. Participation

In political polls, some types of voters just won’t respond. Some analysts believe that, in the 2016 election, enmity toward the establishment (government and media, including the polls) kept many Trump voters from participating in election surveys. When the most passionate group favoring one candidate doesn’t respond to election polls, the polls underestimate support for that candidate. In citizen surveys, those who don’t respond tend to be less involved in the community. That does not mean their opinions about the community differ sharply from those of more involved residents. Instead, their life circumstances make taking a survey a lower priority.

5. Controversy

Political poll responses are driven by values that tend to be polarized in the U.S. Citizen surveys are about observed community quality, so residents are not motivated by doctrinaire positions that can whipsaw aggregate results depending on who participates. Those who participate in citizen surveys generally share the perspectives of those who do not. So even a response rate as low as a poll’s does not undermine the credibility of a citizen survey.

6. Response Rates

Response rates for most telephone polls are much lower than those for citizen surveys conducted by mail. Typical phone response rates are about nine percent these days, while well-conducted citizen surveys achieve response rates of 20 to 30 percent.

7. Purpose

Political polls must pick winners and losers, and they make those calls within a modest but real margin of uncertainty. To stir excitement, talking heads usually ignore the error range and name a winner who could just as easily be the loser because the race is so close. Citizen surveys point public sector decision-makers toward larger differences, the kind that matter for policy decisions. For example, whether 15 percent or 25 percent of residents give good ratings to street repair, government action may be required. The same is true for differences in survey sentiment over time or compared with other places. Properly interpreted, citizen survey results help government leaders by steering them clear of small differences, whereas the lifeblood of media polls is making alps out of anthills.
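To make the point about small versus large differences concrete, here is a minimal Python sketch of the standard normal-approximation margin of error for a proportion. It assumes a simple random sample and a 95 percent confidence level (z = 1.96); real surveys are usually weighted, which widens the margin somewhat. The sample sizes and ratings are illustrative only, not results from any actual survey, and `difference_is_clear` is a hypothetical helper for comparing two independent samples, such as this year’s and a prior year’s citizen survey.

```python
import math

Z_95 = 1.96  # z-score for a two-sided 95% confidence level

def margin_of_error(p: float, n: int, z: float = Z_95) -> float:
    """Normal-approximation margin of error for a proportion p
    estimated from a simple random sample of n respondents."""
    return z * math.sqrt(p * (1 - p) / n)

def difference_is_clear(p1: float, n1: int, p2: float, n2: int, z: float = Z_95) -> bool:
    """Hypothetical helper: True when the gap between proportions from two
    independent samples is larger than the margin of error for that gap."""
    standard_error = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return abs(p1 - p2) > z * standard_error

if __name__ == "__main__":
    # Illustrative: 400 respondents, half giving a "good" rating.
    print(f"+/- {margin_of_error(0.50, 400):.1%}")
    # Illustrative: 15% vs. 25% rating street repair as good, 400 per sample.
    print(difference_is_clear(0.15, 400, 0.25, 400))
    # Illustrative: a 2-point gap between two samples of 1,000.
    print(difference_is_clear(0.48, 1000, 0.46, 1000))
```

With 400 respondents, the margin on a single rating is roughly plus or minus 5 percentage points, so a 15 versus 25 percent split stands out clearly, while a 2-point gap between two samples of 1,000 falls within the noise.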

Related Articles

- Do Election Seasons Hurt Citizen Survey Responses?

- Social Desirability Bias and Mode Effect

- Trump Your Inner Self

This is an updated article originally published in November 2016.
